Customize the scheduler

Karmada ships with a default scheduler that is described here. If the default scheduler does not suit your needs, you can implement your own scheduler.

Karmada's Scheduler Framework is similar to the Kubernetes scheduling framework. Unlike Kubernetes, however, Karmada deploys applications to a group of clusters rather than to nodes. Based on the placement field of the user's scheduling policy and its internal scheduling plugins, Karmada deploys the user's application to the desired group of clusters.

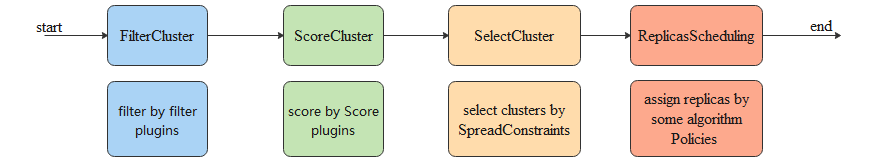

The scheduling process can be divided into the following four steps:

- Predicate: filter out inappropriate clusters
- Priority: score the clusters
- SelectClusters: select cluster groups based on cluster scores and SpreadConstraint
- ReplicaScheduling: deploy the application replicas on the selected cluster group according to the configured replica scheduling policy

Of these steps, the filtering and scoring plugins can be customized and configured through the scheduler framework.
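The four steps can be pictured as a small pipeline. The following Go sketch is not Karmada's implementation: Cluster, filterFunc, scoreFunc and schedule are hypothetical, simplified stand-ins, and the replica step uses only an even split, whereas the real scheduler applies the replica scheduling policy configured in the placement.

```go
package main

import (
	"fmt"
	"sort"
)

// Cluster is a hypothetical, simplified stand-in for Karmada's Cluster API object.
type Cluster struct {
	Name   string
	Ready  bool
	Region string
}

// filterFunc and scoreFunc are hypothetical stand-ins for filter and score plugins.
type filterFunc func(c Cluster) bool
type scoreFunc func(c Cluster) int64

type scoredCluster struct {
	cluster Cluster
	score   int64
}

// schedule runs the four steps in order and returns the replicas per cluster name.
func schedule(clusters []Cluster, filters []filterFunc, scorers []scoreFunc,
	maxClusters int, replicas int32) map[string]int32 {
	var scored []scoredCluster
	for _, c := range clusters {
		// Predicate: keep only clusters that pass every filter.
		feasible := true
		for _, f := range filters {
			if !f(c) {
				feasible = false
				break
			}
		}
		if !feasible {
			continue
		}
		// Priority: sum the scores of all score functions.
		var total int64
		for _, s := range scorers {
			total += s(c)
		}
		scored = append(scored, scoredCluster{cluster: c, score: total})
	}

	// SelectClusters: keep at most maxClusters of the highest-scoring clusters.
	sort.SliceStable(scored, func(i, j int) bool { return scored[i].score > scored[j].score })
	if len(scored) > maxClusters {
		scored = scored[:maxClusters]
	}

	// ReplicaScheduling: divide replicas as evenly as possible, giving the
	// remainder to the highest-scoring clusters first.
	result := make(map[string]int32, len(scored))
	n := int32(len(scored))
	for i, sc := range scored {
		share := replicas / n
		if int32(i) < replicas%n {
			share++
		}
		result[sc.cluster.Name] = share
	}
	return result
}

func main() {
	clusters := []Cluster{
		{Name: "member1", Ready: true, Region: "us"},
		{Name: "member2", Ready: true, Region: "eu"},
		{Name: "member3", Ready: false, Region: "us"},
	}
	readyOnly := func(c Cluster) bool { return c.Ready }
	preferUS := func(c Cluster) int64 {
		if c.Region == "us" {
			return 100
		}
		return 0
	}
	// member3 is filtered out; member1 outscores member2 and gets the extra replica.
	fmt.Println(schedule(clusters, []filterFunc{readyOnly}, []scoreFunc{preferUS}, 2, 5))
	// prints: map[member1:3 member2:2]
}
```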

The default scheduler has several in-tree plugins:

- APIEnablement: a plugin that checks whether the API (CRD) of the resource is installed in the target cluster.
- TaintToleration: a plugin that checks whether a propagation policy tolerates a cluster's taints.
- ClusterAffinity: a plugin that checks whether the cluster, including its labels, matches the cluster affinity in the placement.
- SpreadConstraint: a plugin that checks whether the cluster has the spread properties in Cluster.Spec (such as provider, region, or zone) that the spread constraints require.
- ClusterLocality: a score plugin that favors clusters that already have the resource.
- ClusterEviction: a plugin that checks whether the target cluster is in the binding's GracefulEvictionTasks, which means the resource is being evicted from it.
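To make the difference between filter and score plugins concrete, here is a simplified sketch of what ClusterEviction and ClusterLocality check, based only on the descriptions above. bindingSpec, its fields, and the 0 to 100 score range are assumptions for illustration; the in-tree plugins operate on Karmada's real ResourceBinding and Cluster types.

```go
package main

import "fmt"

// bindingSpec is a hypothetical, simplified stand-in for a ResourceBinding spec.
type bindingSpec struct {
	scheduledClusters []string // clusters the resource is already scheduled to
	evictingClusters  []string // clusters listed in graceful eviction tasks
}

// Hypothetical score bounds used by this sketch.
const (
	minClusterScore int64 = 0
	maxClusterScore int64 = 100
)

func contains(list []string, name string) bool {
	for _, s := range list {
		if s == name {
			return true
		}
	}
	return false
}

// clusterEvictionFilter (filter): rejects clusters the resource is being evicted from.
func clusterEvictionFilter(spec bindingSpec, cluster string) bool {
	return !contains(spec.evictingClusters, cluster)
}

// clusterLocalityScore (score): favors clusters that already have the resource.
func clusterLocalityScore(spec bindingSpec, cluster string) int64 {
	if contains(spec.scheduledClusters, cluster) {
		return maxClusterScore
	}
	return minClusterScore
}

func main() {
	spec := bindingSpec{
		scheduledClusters: []string{"member1"},
		evictingClusters:  []string{"member2"},
	}
	for _, c := range []string{"member1", "member2", "member3"} {
		fmt.Printf("%s feasible=%t score=%d\n", c, clusterEvictionFilter(spec, c), clusterLocalityScore(spec, c))
	}
}
```

A filter answers yes or no per cluster, while a score ranks the clusters that survive filtering.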

You can write out-of-tree plugins for your own scenarios and build your scheduler on Karmada's Scheduler Framework.

This document describes in detail how to customize a Karmada scheduler.

Before you begin

You need to have a Karmada control plane. To start up Karmada, refer to the documentation here.

If you just want to try Karmada, we recommend building a development environment with the hack/local-up-karmada.sh script:

```shell
git clone https://github.com/karmada-io/karmada
cd karmada
hack/local-up-karmada.sh
```

Deploy a plugin

Assume you want to deploy a new filter plugin named TestFilter. You can refer to the karmada-scheduler implementation in pkg/scheduler/framework/plugins in the Karmada source directory.

After development, the code directory looks similar to this:

```
.
├── apienablement
├── clusteraffinity
├── clustereviction
├── clusterlocality
├── spreadconstraint
├── tainttoleration
├── testfilter
│   ├── test_filter.go
```

The content of test_filter.go is shown below; the specific filtering logic is omitted.

```go
package testfilter

import (
	"context"

	clusterv1alpha1 "github.com/karmada-io/karmada/pkg/apis/cluster/v1alpha1"
	workv1alpha2 "github.com/karmada-io/karmada/pkg/apis/work/v1alpha2"
	"github.com/karmada-io/karmada/pkg/scheduler/framework"
)

const (
	// Name is the name of the plugin used in the plugin registry and configurations.
	Name = "TestFilter"
)

// TestFilter is a custom filter plugin.
type TestFilter struct{}

// Compile-time check that TestFilter implements framework.FilterPlugin.
var _ framework.FilterPlugin = &TestFilter{}

// New instantiates the TestFilter plugin.
func New() (framework.Plugin, error) {
	return &TestFilter{}, nil
}

// Name returns the plugin name.
func (p *TestFilter) Name() string {
	return Name
}

// Filter implements the filtering logic of the TestFilter plugin.
func (p *TestFilter) Filter(ctx context.Context,
	bindingSpec *workv1alpha2.ResourceBindingSpec, bindingStatus *workv1alpha2.ResourceBindingStatus, cluster *clusterv1alpha1.Cluster) *framework.Result {
	// implementation
	return framework.NewResult(framework.Success)
}
```

A filter plugin must implement the framework.FilterPlugin interface, and a score plugin must implement the framework.ScorePlugin interface.

Register the plugin

Edit cmd/scheduler/main.go:

```go
package main

import (
	"os"

	"k8s.io/component-base/cli"
	_ "k8s.io/component-base/logs/json/register" // for JSON log format registration
	controllerruntime "sigs.k8s.io/controller-runtime"
	_ "sigs.k8s.io/controller-runtime/pkg/metrics"

	"github.com/karmada-io/karmada/cmd/scheduler/app"
	"github.com/karmada-io/karmada/pkg/scheduler/framework/plugins/testfilter"
)

func main() {
	stopChan := controllerruntime.SetupSignalHandler().Done()
	// Register the out-of-tree TestFilter plugin in addition to the in-tree plugins.
	command := app.NewSchedulerCommand(stopChan, app.WithPlugin(testfilter.Name, testfilter.New))
	code := cli.Run(command)
	os.Exit(code)
}
```

To register the plugin, pass its name and factory function to NewSchedulerCommand through the app.WithPlugin option, as in main() above.
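Conceptually, registration adds a name-to-factory entry to the scheduler's plugin registry. The sketch below models this with options passed to a constructor, in the same style as app.WithPlugin being passed to NewSchedulerCommand. Registry, Option, WithPlugin and newRegistry here are hypothetical stand-ins rather than Karmada's types, and the in-tree plugin list is abbreviated.

```go
package main

import (
	"fmt"
	"sort"
)

// Hypothetical stand-ins for the scheduler's plugin registry.
type Plugin interface{ Name() string }

type PluginFactory func() (Plugin, error)

type Registry map[string]PluginFactory

// Register adds a factory and refuses duplicate names.
func (r Registry) Register(name string, factory PluginFactory) error {
	if _, exists := r[name]; exists {
		return fmt.Errorf("a plugin named %q already exists", name)
	}
	r[name] = factory
	return nil
}

// Option customizes the registry before the scheduler starts.
type Option func(Registry) error

// WithPlugin returns an Option that registers an out-of-tree plugin.
func WithPlugin(name string, factory PluginFactory) Option {
	return func(r Registry) error { return r.Register(name, factory) }
}

type namedPlugin string

func (p namedPlugin) Name() string { return string(p) }

func newFactory(name string) PluginFactory {
	return func() (Plugin, error) { return namedPlugin(name), nil }
}

// newRegistry starts with (some of) the in-tree plugins, then applies each option.
func newRegistry(opts ...Option) (Registry, error) {
	r := Registry{}
	for _, name := range []string{"APIEnablement", "ClusterAffinity", "TaintToleration"} {
		if err := r.Register(name, newFactory(name)); err != nil {
			return nil, err
		}
	}
	for _, opt := range opts {
		if err := opt(r); err != nil {
			return nil, err
		}
	}
	return r, nil
}

// sortedNames lists the registered plugin names in a stable order.
func sortedNames(r Registry) []string {
	names := make([]string, 0, len(r))
	for name := range r {
		names = append(names, name)
	}
	sort.Strings(names)
	return names
}

func main() {
	r, err := newRegistry(WithPlugin("TestFilter", newFactory("TestFilter")))
	if err != nil {
		fmt.Println("error:", err)
		return
	}
	fmt.Println(sortedNames(r))
}
```

Rejecting duplicate names at registration time surfaces a name clash with an in-tree plugin as a startup error rather than silently replacing it; that is a design choice of this sketch.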

Package the scheduler

After you register the plugin, package your scheduler binary into a container image:

```shell
cd karmada
export VERSION=<your-image-tag>
make image-karmada-scheduler
```

Then update the karmada-scheduler deployment to use your image:

```shell
$ kubectl --kubeconfig ~/.kube/karmada.config --context karmada-host edit deploy/karmada-scheduler -n karmada-system
...
    spec:
      automountServiceAccountToken: false
      containers:
      - command:
        - /bin/karmada-scheduler
        - --kubeconfig=/etc/kubeconfig
        - --bind-address=0.0.0.0
        - --secure-port=10351
        - --enable-scheduler-estimator=true
        - --v=4
        image: <your-image-address>
...
```

When the scheduler starts, its logs show that the TestFilter plugin has been enabled:

```
I0408 12:57:14.563522 1 scheduler.go:141] karmada-scheduler version: version.Info{GitVersion:"v1.9.0-preview5", GitCommit:"0126b90fc89d2f5509842ff8dc7e604e84288b96", GitTreeState:"clean", BuildDate:"2024-01-29T13:29:49Z", GoVersion:"go1.20.11", Compiler:"gc", Platform:"linux/amd64"}
I0408 12:57:14.564979 1 registry.go:79] Enable Scheduler plugin "ClusterAffinity"
I0408 12:57:14.564991 1 registry.go:79] Enable Scheduler plugin "SpreadConstraint"
I0408 12:57:14.564996 1 registry.go:79] Enable Scheduler plugin "ClusterLocality"
I0408 12:57:14.564999 1 registry.go:79] Enable Scheduler plugin "ClusterEviction"
I0408 12:57:14.565002 1 registry.go:79] Enable Scheduler plugin "APIEnablement"
I0408 12:57:14.565005 1 registry.go:79] Enable Scheduler plugin "TaintToleration"
I0408 12:57:14.565008 1 registry.go:79] Enable Scheduler plugin "TestFilter"
```

Configure the plugin

You can control which plugins are enabled with the --plugins flag. In its value, * enables all in-tree and custom plugins, a plugin name enables that plugin, and a name prefixed with - disables it.

For example, the following configuration disables the TestFilter plugin:

```shell
$ kubectl --kubeconfig ~/.kube/karmada.config --context karmada-host edit deploy/karmada-scheduler -n karmada-system
...
    spec:
      automountServiceAccountToken: false
      containers:
      - command:
        - /bin/karmada-scheduler
        - --kubeconfig=/etc/kubeconfig
        - --bind-address=0.0.0.0
        - --secure-port=10351
        - --enable-scheduler-estimator=true
        - --plugins=*,-TestFilter
        - --v=4
        image: <your-image-address>
...
```

Configure multiple schedulers

Run the second scheduler in the cluster

You can run multiple schedulers simultaneously alongside the default scheduler and tell Karmada which scheduler to use for each of your workloads.

Here is a sample deployment config. You can save it as my-scheduler.yaml:

```yaml
apiVersion: apps/v1
kind: Deployment
metadata:
  name: my-karmada-scheduler
  namespace: karmada-system
  labels:
    app: my-karmada-scheduler
spec:
  replicas: 1
  selector:
    matchLabels:
      app: my-karmada-scheduler
  template:
    metadata:
      labels:
        app: my-karmada-scheduler
    spec:
      automountServiceAccountToken: false
      tolerations:
        - key: node-role.kubernetes.io/master
          operator: Exists
      containers:
        - name: karmada-scheduler
          image: docker.io/karmada/karmada-scheduler:latest
          imagePullPolicy: IfNotPresent
          livenessProbe:
            httpGet:
              path: /healthz
              port: 10351
              scheme: HTTP
            failureThreshold: 3
            initialDelaySeconds: 15
            periodSeconds: 15
            timeoutSeconds: 5
          command:
            - /bin/karmada-scheduler
            - --kubeconfig=/etc/kubeconfig
            - --bind-address=0.0.0.0
            - --secure-port=10351
            - --enable-scheduler-estimator=true
            - --leader-elect-resource-name=my-scheduler # Your custom scheduler name
            - --scheduler-name=my-scheduler # Your custom scheduler name
            - --v=4
          volumeMounts:
            - name: kubeconfig
              subPath: kubeconfig
              mountPath: /etc/kubeconfig
      volumes:
        - name: kubeconfig
          secret:
            secretName: kubeconfig
```

Note: The --leader-elect-resource-name option defaults to karmada-scheduler. If you deploy another scheduler alongside the default scheduler, set this option so that the two schedulers do not compete for the same leader-election lock; using the scheduler name as the value is recommended.
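Why the lock name matters can be shown with a toy model. leaseStore and tryAcquire are hypothetical, in-memory stand-ins for leader-election Lease objects; real leader election also renews and expires leases, which this sketch omits.

```go
package main

import (
	"fmt"
	"sync"
)

// leaseStore is a hypothetical in-memory stand-in for leader-election locks.
type leaseStore struct {
	mu      sync.Mutex
	holders map[string]string // lease name -> identity of the current holder
}

func newLeaseStore() *leaseStore {
	return &leaseStore{holders: map[string]string{}}
}

// tryAcquire reports whether id holds, or has just acquired, the named lease.
func (s *leaseStore) tryAcquire(lease, id string) bool {
	s.mu.Lock()
	defer s.mu.Unlock()
	holder, taken := s.holders[lease]
	if !taken {
		s.holders[lease] = id
		return true
	}
	return holder == id
}

func main() {
	s := newLeaseStore()
	fmt.Println(s.tryAcquire("karmada-scheduler", "default-scheduler-pod")) // true: first holder
	fmt.Println(s.tryAcquire("karmada-scheduler", "my-scheduler-pod"))      // false: lock already held
	fmt.Println(s.tryAcquire("my-scheduler", "my-scheduler-pod"))           // true: separate lock
}
```

With a shared lock name, the second scheduler would wait forever as a follower; with its own lock name, both schedulers can lead independently.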

To run your scheduler, create the deployment defined above in the karmada-host cluster:

```shell
kubectl --context karmada-host create -f my-scheduler.yaml
```

Verify that the scheduler pod is running:

```shell
kubectl --context karmada-host get pods --namespace=karmada-system
```

```
NAME                               READY   STATUS    RESTARTS   AGE
...
my-karmada-scheduler-lnf4s-4744f   1/1     Running   0          2m
...
```

You should see a "Running" my-karmada-scheduler pod, in addition to the default karmada-scheduler pod in this list.

Specify schedulers for deployments

Now that your second scheduler is running, create some deployments and direct them to be scheduled by either the default scheduler or the one you deployed. To schedule a given deployment with a specific scheduler, specify the scheduler's name in its PropagationPolicy spec. Let's look at three examples.

- PropagationPolicy spec without any scheduler name

```yaml
apiVersion: policy.karmada.io/v1alpha1
kind: PropagationPolicy
metadata:
  name: nginx-propagation
spec:
  resourceSelectors:
    - apiVersion: apps/v1
      kind: Deployment
      name: nginx
  placement:
    clusterAffinity:
      clusterNames:
        - member1
        - member2
```

When no scheduler name is supplied, the deployment is automatically scheduled using the default-scheduler.

- PropagationPolicy spec with default-scheduler

```yaml
apiVersion: policy.karmada.io/v1alpha1
kind: PropagationPolicy
metadata:
  name: nginx-propagation
spec:
  schedulerName: default-scheduler
  resourceSelectors:
    - apiVersion: apps/v1
      kind: Deployment
      name: nginx
  placement:
    clusterAffinity:
      clusterNames:
        - member1
        - member2
```

A scheduler is specified by supplying its name as the value of spec.schedulerName. In this case, we supply the name of the default scheduler, default-scheduler.

- PropagationPolicy spec with my-scheduler

```yaml
apiVersion: policy.karmada.io/v1alpha1
kind: PropagationPolicy
metadata:
  name: nginx-propagation
spec:
  schedulerName: my-scheduler
  resourceSelectors:
    - apiVersion: apps/v1
      kind: Deployment
      name: nginx
  placement:
    clusterAffinity:
      clusterNames:
        - member1
        - member2
```

In this case, we specify that this deployment should be scheduled by the scheduler we deployed, my-scheduler.

Note that the value of spec.schedulerName must match the value of the --scheduler-name flag passed to the second scheduler (my-scheduler in the deployment above).
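The matching rule can be summarized in a few lines of Go. responsibleFor is a hypothetical helper, not Karmada's code; it treats an empty spec.schedulerName as default-scheduler, as described for the first example.

```go
package main

import "fmt"

// defaultSchedulerName is used when a policy leaves spec.schedulerName empty.
const defaultSchedulerName = "default-scheduler"

// responsibleFor reports whether a scheduler started with
// --scheduler-name=schedulerName should handle a policy whose
// spec.schedulerName is policySchedulerName.
func responsibleFor(policySchedulerName, schedulerName string) bool {
	if policySchedulerName == "" {
		policySchedulerName = defaultSchedulerName
	}
	return policySchedulerName == schedulerName
}

func main() {
	for _, policy := range []string{"", "default-scheduler", "my-scheduler"} {
		fmt.Printf("policy %q -> default-scheduler: %t, my-scheduler: %t\n",
			policy,
			responsibleFor(policy, defaultSchedulerName),
			responsibleFor(policy, "my-scheduler"))
	}
}
```

Exactly one of the two schedulers claims each policy, which is why a typo in either name leaves the workload unscheduled.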

Verifying that the deployments were scheduled using the desired schedulers

To verify that the deployments were scheduled by the desired schedulers, look at the "Scheduled" entries in the event logs:

```shell
kubectl --context karmada-apiserver describe deploy/nginx
```